Challenges keep multiplying in today’s complex world. Depending on how you choose to address them, your decisions can either limit or create new opportunities.

Our teams of solvers seamlessly blend their real-world experience with industry-leading technologies to help address your unique problems, deliver powerful outcomes and position you for the future.

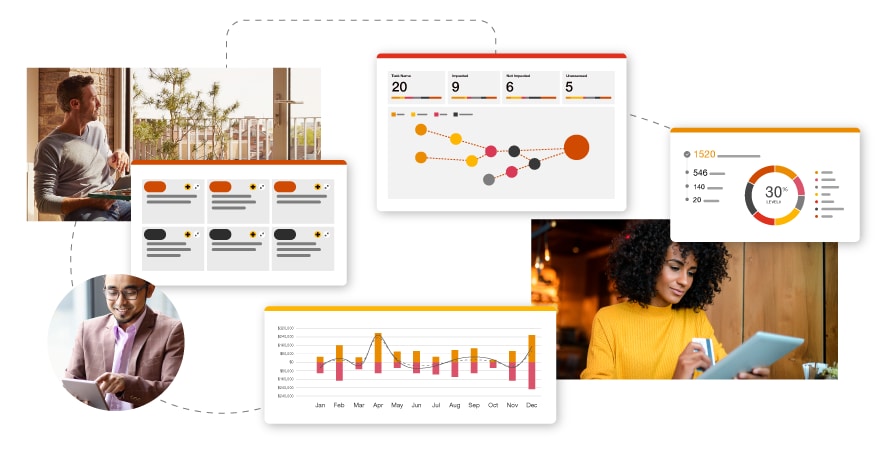

Transform your business with PwC’s innovative tech offerings for today’s challenges.

Organizations face myriad challenges from a rapidly changing technology and systems environment. The Internet of Things (IoT) is a hot topic, but investments yield the results organizations want and need only once they can act on the insights it provides. Connected Solutions is a patented IoT platform that can help accelerate productivity, drive cost savings and keep your physical spaces safe, all while helping you meet vital ESG targets.

As customer expectations constantly evolve, companies increasingly turn to the cloud for help. Even so, many organizations struggle to realize the full value of their cloud investments or achieve their desired business results. PwC's Industry Cloud solutions are tech-enabled services that combine deep industry knowledge with pre-built, industry-specific digital assets, leveraging leading cloud platforms and integrating with top technologies to help accelerate your digital transformation.

Amid change and complexity, tax professionals need a more dynamic solution—one that can position them for growth, help them become more agile and allow for the strategic use of time and resources. Beacon enables multinational organizations to reimagine complex tax calculations across various stages of the tax lifecycle, for both Pillar Two and US international tax. Streamline tax modeling and reporting with our powerful, dynamic tools that use graph theory.

Businesses today struggle with managing compliance amid growing regulations, evolving risks, outdated systems, manual processes and cost reduction pressures. Risk Link provides a transformative solution. Powered by generative AI, it creates a connected risk ecosystem that maps regulations to controls, generates control language, parses regulatory updates, flags areas for remediation and streamlines task management. Risk Link empowers businesses to navigate compliance risk more effectively.

Economic forces are pushing companies to find efficiencies, especially in accounting and bookkeeping. With tight deadlines and a dependence on accurate data, doing more with less is imperative. Bookkeeping Connect brings together industry-leading technology and experienced PwC professionals to help automate processes and simplify workflows. By providing timely and accurate bookkeeping and accounting, it can help establish a solid foundation of trustworthy data, enabling businesses to make informed decisions more efficiently.

Organizations continually face workforce pressures and struggle with understanding how their people decisions stack up against the market. Frequently, they don't know how they compare in areas such as spans of control, labor costs, turnover, D&I, staffing efficiencies, and HR costs and structures. Saratoga Benchmarking helps you compare and assess your workforce metrics against the market and your industry peers. Gain deep insights into people strategy to help you evaluate and improve current processes and programs.

As the models organizations use in the age of AI become more complex and impactful, responsible and transparent model management becomes essential to meeting critical business needs. Model Edge helps address those needs by providing model risk management, validation and orchestration capabilities. With Model Edge, companies can effectively navigate the complexities of model management, promoting transparency and responsible decision-making while maintaining trust and accountability.

Here’s an example of how one company benefited from Listen Platform.

Case study

Hyatt worked with PwC to create an ongoing listening strategy by leveraging the capabilities of our Listen Platform.

“PwC Connected Solutions has evolved in recent years to encompass an unexpectedly — for a Big Four professional services firm — broad set of offerings, ranging from sensors and hardware to network and cloud to dashboard analytics and reporting.”

“In becoming more proactive in preparing and adapting to risks, clients can become more resilient. With the right insight, they can mitigate and prepare for whatever change is ahead. PwC helps them create a panoramic view of their unique risk landscape, so they can act boldly and purposefully.”

2024 Risk.net Technology Award